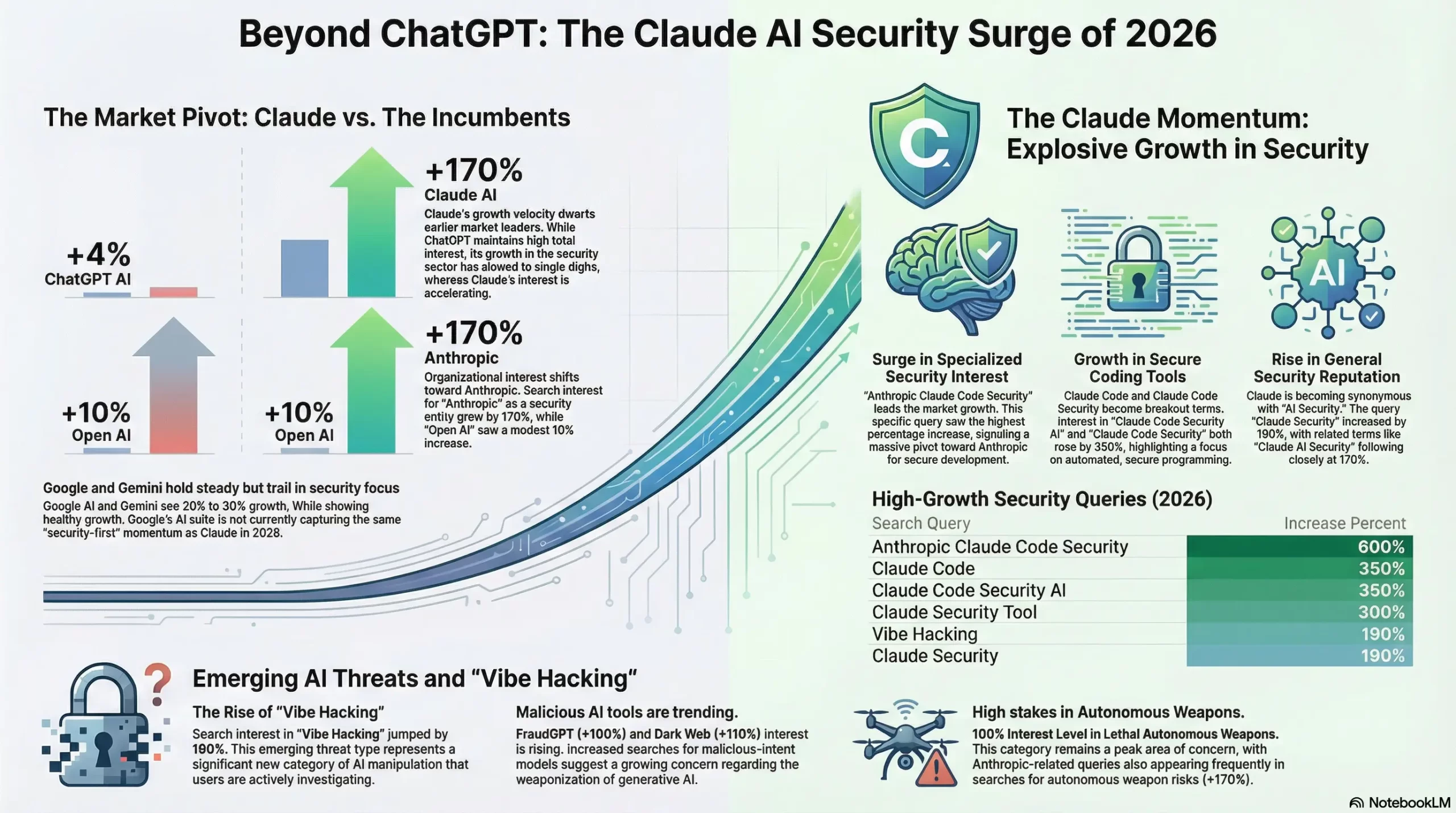

Anthropic’s Constitutional AI architecture isn’t just a philosophical curiosity — it’s becoming the enterprise gold standard for safe, intelligent deployment. Here’s why the industry is taking notice.

Something fundamental changed in the AI landscape over the last twelve months. Not gradually — but with the kind of irreversible shift you only recognize clearly in retrospect. The question enterprise technology leaders are now asking isn’t “should we adopt AI?” It’s a sharper, more urgent question: “Which AI can we actually trust?” And that single question is reshaping the competitive dynamic between the two giants of the space — OpenAI’s ChatGPT and Anthropic’s Claude — in ways that nobody fully predicted.

Claude is built by Anthropic, a company founded in 2021 by a group of former OpenAI employees including Dario and Daniela Amodei. Anthropic has spent the years since developing something its competitors largely treat as secondary: a principled, rigorous approach to AI safety that isn’t a constraint on capability, but an expression of it. In 2026, that approach is paying dividends at an extraordinary scale.

Let’s be clear about one thing before we go further. This isn’t a takedown piece. ChatGPT remains, by many measures, the most widely used AI assistant on the planet. It is genuinely excellent at what it does. But excellence in a specific set of capabilities and trustworthiness as a foundation for sensitive, high-stakes workflows are two different things — and the industry is increasingly demanding both simultaneously. Claude has made the latter its defining competitive advantage.

🛡️ What Is Claude AI, and Why Does Security Matter?

Claude is a large language model — and increasingly, a suite of AI-powered tools — developed by Anthropic. The company was founded explicitly around the thesis that building safe AI and building capable AI are not trade-offs. That thesis, which seemed almost quixotic in 2021 when AI capability was the sole measure of progress, now reads as prescient.

The technical foundation of Claude’s safety approach is something Anthropic calls Constitutional AI. Rather than relying solely on human feedback to train Claude’s behavior, Anthropic gives the model a set of explicit written principles, a “constitution,” that guides how it evaluates its own responses. During training, the model critiques and revises its draft outputs against these principles, and those revisions, along with AI-generated preference judgments, are used to fine-tune it. This isn’t a filter bolted on afterward. It’s behavior learned during training itself.

Constitutional AI is Anthropic’s training methodology in which Claude uses a written set of principles to self-critique its outputs during training. Rather than waiting for human reviewers to flag harmful content, the model learns to identify and correct problematic reasoning internally — producing a model that is resistant to manipulation at a foundational level.
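
To make the self-critique idea concrete, here is a minimal sketch of one critique-and-revise pass. It is a toy under stated assumptions, not Anthropic’s implementation: `PRINCIPLES`, `critique`, `revise`, and `build_example` are hypothetical names, and simple string matching stands in for the language model that performs both steps in the real method.

```python
import re
from dataclasses import dataclass

# Hypothetical toy "constitution": each principle maps to a phrase to avoid.
# Real principles are natural-language statements judged by a language model.
PRINCIPLES = {
    "avoid insults": "idiot",
    "avoid false certainty": "guaranteed",
}


@dataclass
class TrainingExample:
    prompt: str
    draft: str
    revised: str


def critique(draft: str) -> list[str]:
    """Return the names of the principles the draft appears to violate."""
    lowered = draft.lower()
    return [name for name, phrase in PRINCIPLES.items() if phrase in lowered]


def revise(draft: str, violations: list[str]) -> str:
    """Toy revision: mask the offending phrase for each violated principle."""
    revised = draft
    for name in violations:
        pattern = re.escape(PRINCIPLES[name])
        revised = re.sub(pattern, "[removed]", revised, flags=re.IGNORECASE)
    return revised


def build_example(prompt: str, draft: str) -> TrainingExample:
    """Pair a draft with its revision; the revision is what training would use."""
    violations = critique(draft)
    return TrainingExample(prompt, draft, revise(draft, violations))


example = build_example("Is this fund safe?", "Returns are guaranteed, you idiot.")
print(example.revised)
```

The point of the sketch is the data flow: the draft is judged against written principles, rewritten, and the rewritten version (not the draft) becomes the training signal.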

The practical security benefits of this approach are significant and measurable. Anthropic holds SOC 2 Type 2 certification for Claude and offers a HIPAA compliance option on enterprise plans, which makes it suitable for financial services, healthcare, legal, and government deployments. According to Walturn’s independent analysis, Claude is approximately 10 times more resistant to jailbreaks and misuse attempts than leading competitors. In an era where prompt injection attacks are an active area of adversarial research, that number matters enormously.

Claude has the strictest security protocols of any major AI platform. For sensitive business data, it remains the defensible enterprise choice.

— Clickforest AI Tools Comparison 2026

🧠 How Does Claude Think Differently From ChatGPT?

Here’s where the philosophical dimension becomes genuinely interesting — and practically important. Claude and ChatGPT represent different bets about what an AI assistant fundamentally is.

OpenAI, historically, has optimized for capability breadth and user satisfaction. ChatGPT is astonishingly versatile. It generates images, processes audio, creates video clips, integrates with hundreds of third-party tools, and is remarkably agreeable in conversation. It tends, as the independent comparison site Zemith noted, to “just do the thing you asked without questioning it.” For many users, that’s a feature, not a bug.

Anthropic made a different bet. Claude is designed to be what some researchers call a “careful reasoner” — a system that thinks more slowly and more explicitly about the implications of a request before responding. It will sometimes push back. It will occasionally offer an alternative framing. It will flag when it’s uncertain. In an enterprise context, where the cost of a hallucinated financial analysis or a mishandled legal document is measured in real money and real liability, those qualities aren’t obstacles. They’re precisely what you want.

The 365 Mechanix AI comparison, published just two days ago, put it well: Claude has leaned into a specific identity — “careful reasoning” — that “manifests as coherence across large documents, structured analysis and a tone that many teams interpret as deliberate rather than conversational.” It’s the difference between a tool that does what you say and a system that understands what you mean.

Claude’s context window extends to 200,000 tokens on standard paid plans, with 1 million tokens available via API — allowing it to process an entire book manuscript, codebase, or multi-year document archive in a single prompt without losing coherence.
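
As a rough illustration of what those limits mean in practice, the sketch below estimates whether a document fits in a given context window. The two limits come from the figures quoted above; the four-characters-per-token ratio is only a common rule of thumb for English text, not Claude’s actual tokenizer, and the function names and the output reserve are assumptions made for this example.

```python
# Context limits as quoted in this article (tokens).
CONTEXT_LIMITS = {"standard": 200_000, "api_extended": 1_000_000}

# Assumption: about 4 characters per token for English prose.
# A real integration should use the provider's own token counting.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count with ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, plan: str = "standard",
                    reserve_for_output: int = 4_000) -> bool:
    """Check that the prompt plus a reserved output budget fits the window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_LIMITS[plan]


manuscript = "a" * 800_000  # stand-in for an 800,000-character document
print(fits_in_context(manuscript, "standard"))
print(fits_in_context(manuscript, "api_extended"))
```

Reserving part of the window for the response matters because input and output share the same budget; a document that exactly fills the window leaves no room for an answer.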

⚙️ Claude Code Security: A New Frontier in AI-Powered Cybersecurity

Perhaps the most striking signal of Claude’s security positioning in 2026 is the emergence of Claude Code Security — a recently launched feature that scans production codebases to find and patch complex vulnerabilities. According to a detailed analysis published on the DEV Community just 24 hours ago, it “reads code like a human security researcher, tracing data flows rather than just matching known threat patterns.”

This distinction is critical. Traditional static analysis tools work by pattern-matching against known vulnerability signatures. They’re good at finding yesterday’s problems. Claude Code Security reasons about code semantically — it understands the intent of a function, traces how data flows through a system, and identifies vulnerabilities that don’t match any existing signature. That’s a meaningfully different capability.
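
The difference between signature matching and data-flow reasoning can be shown with a toy example. This sketch does not describe how Claude Code Security works internally, which the sources quoted here do not detail. It only contrasts a regex signature with a very small taint tracker over a made-up five-line program; `request_param`, `sanitize`, and `run_query` are hypothetical names.

```python
import re

# Toy program: user input reaches a query sink through an intermediate variable.
SOURCE_LINES = [
    "user_id = request_param('id')",
    "clause = 'id = ' + user_id",
    "safe = sanitize(user_id)",
    "run_query(clause)",
    "run_query(safe)",
]

# Signature approach: flag only sinks that mention the input source directly.
SIGNATURE = re.compile(r"run_query\(.*request_param")
ASSIGN = re.compile(r"^(\w+)\s*=\s*(.+)$")
SINK = re.compile(r"^run_query\((\w+)\)$")


def signature_scan(lines: list[str]) -> list[int]:
    """Return indexes of lines that match the known-bad pattern."""
    return [i for i, line in enumerate(lines) if SIGNATURE.search(line)]


def taint_scan(lines: list[str]) -> list[int]:
    """Track which variables carry user input and flag tainted sink calls."""
    tainted: set[str] = set()
    findings: list[int] = []
    for i, line in enumerate(lines):
        sink = SINK.match(line)
        if sink:
            if sink.group(1) in tainted:
                findings.append(i)
            continue
        assign = ASSIGN.match(line)
        if not assign:
            continue
        target, expr = assign.groups()
        # Crude tokenization: words inside string literals are also picked up,
        # which is acceptable for this toy but not for a real analyzer.
        names = set(re.findall(r"\w+", expr))
        if "sanitize" in names:
            tainted.discard(target)   # sanitizer output is treated as clean
        elif "request_param" in names or names & tainted:
            tainted.add(target)       # taint propagates through assignment
        else:
            tainted.discard(target)
    return findings


print(signature_scan(SOURCE_LINES))
print(taint_scan(SOURCE_LINES))
```

The signature finds nothing because no single line contains both the source and the sink, while the taint tracker flags the `run_query(clause)` call by following the value across assignments.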

The tool also excels at legacy modernization: deciphering and updating code written in older languages like COBOL, which remains stubbornly embedded in financial and government infrastructure. For organizations navigating the intersection of old systems and new threats, this is not a minor feature. It’s a strategic capability.

According to IntuitionLabs’ February 2026 enterprise guide, major security companies including HackerOne have already adopted Claude for enterprise-level security workflows — one of the first independent validations of Claude’s utility in high-stakes cybersecurity contexts.

[Figure: Claude vs ChatGPT, head-to-head feature comparison, 2026. Data sourced from Zemith, IntuitionLabs, and 365 Mechanix. Updated Feb 2026.]

[Figure: 2026 AI performance benchmarks, independent scores across key enterprise capability areas. Panels: Coding Accuracy (30-Day Independent Test); Terminal-Bench Enterprise Score (Opus 4.6); Long-Form Writing Structure Score (2026 Essay Benchmark). Sources: Zemith.com, IntuitionLabs.ai, Logicweb.com.]

[Figure: Claude’s 6 Security Pillars in 2026. How Anthropic has built the most security-focused AI platform currently available to enterprise teams.]

Who Should Use Claude in 2026?

For Developers: Claude as an Intelligent Pair Programmer

If you work in a terminal-native, Git-first environment managing large repositories, Claude Code is arguably the most capable AI coding assistant available in 2026. Its ability to analyze codebases exceeding 500,000 lines of code with coherent long-context reasoning puts it in a different category from tools optimized for single-function speed.

The SWE-Bench performance gap is real and practically significant. Claude consistently produces cleaner outputs, better error recovery, and more coherent multi-file logic. One developer writing on the DEV Community described it as “pair-programming with a disciplined senior engineer.” Claude Code Security additionally gives security-focused developers a semantic vulnerability scanner that reasons about intent rather than matching patterns — a genuine leap forward from traditional SAST tooling.

The TechTimes comparison published last week concluded that Claude Code works best in terminal-heavy, Git-native environments handling legacy monoliths and 500k+ line codebases with detailed reasoning.

For Enterprise Teams: Security, Compliance, and Reasoning at Scale

Claude Enterprise was explicitly designed for teams where the cost of an error is measured in legal liability, customer trust, or regulatory consequence. It supports SSO, role-based access control, audit logging, and the ability to ingest proprietary knowledge bases — allowing Claude to query internal documentation, policies, and institutional knowledge as fluently as it handles external data.

The IntuitionLabs 2026 enterprise guide notes that major financial firms including NBIM and IG Group, as well as security companies including HackerOne, have already adopted Claude for mission-critical workflows. That list is significant: these are organizations with very low risk tolerance and stringent security requirements. Their adoption is a form of institutional endorsement that speaks louder than any benchmark.

Companies increasingly route different tasks to different models — and for document-heavy, compliance-sensitive workflows, Claude has become the default choice. As DigitalOcean’s analysis notes, Claude Enterprise emphasizes data control, compliance APIs, and very large context windows for document-heavy teams.
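
A routing policy of this kind can be very small. The sketch below is one hypothetical way to express it: the `Task` fields, the 100,000-token threshold, and the returned labels are all assumptions for illustration, and the labels are routing tags rather than real API model identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    description: str
    estimated_tokens: int
    compliance_sensitive: bool = False
    needs_image_generation: bool = False


# Assumption: documents above this size count as "document-heavy".
LONG_DOCUMENT_TOKENS = 100_000


def route(task: Task) -> str:
    """Pick a routing label; compliance needs take priority over features."""
    if task.compliance_sensitive:
        return "claude"
    if task.needs_image_generation:
        return "chatgpt"
    if task.estimated_tokens > LONG_DOCUMENT_TOKENS:
        return "claude"
    return "default"


print(route(Task("contract review", 20_000, compliance_sensitive=True)))
print(route(Task("campaign poster", 500, needs_image_generation=True)))
```

Checking the compliance flag first is a deliberate choice in this sketch: a sensitive task should never be routed on convenience features alone.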

For Finance & Legal: Low Hallucination Rates and Accurate Reasoning

In finance and legal contexts, hallucination is not a minor inconvenience. A fabricated case citation, a made-up financial figure, or a misrepresented regulatory requirement can have serious consequences. Claude’s low hallucination rate — a direct product of its Constitutional AI training methodology — makes it the safer choice for any workflow where factual precision is non-negotiable.

Claude Opus 4.6 specifically outperforms all competitors on legal and financial task benchmarks according to IntuitionLabs’ enterprise testing. The combination of large context windows, structured reasoning, and compliance certifications makes it uniquely positioned for due diligence reviews, contract analysis, financial modeling commentary, and regulatory research.

The fact that Claude Enterprise can be fed an organization’s proprietary knowledge — internal policy documents, deal history, case archives — and query it fluently also means it can function as an institutionally grounded assistant rather than a general-purpose tool working from external knowledge alone.

For Researchers: Deep Reasoning and Intellectual Integrity

Academic and scientific researchers have particular needs that the AI assistant market has historically served poorly: intellectual honesty, epistemic humility, willingness to express uncertainty, and the capacity to reason about nuanced or contested claims without defaulting to false confidence. Claude’s training philosophy aligns with those requirements in ways that make it meaningfully different from systems optimized for user satisfaction.

Claude will push back. It will flag when it’s uncertain. It will offer alternative framings and acknowledge the limits of its knowledge. In a research context, those aren’t limitations — they’re precisely the qualities that make an AI assistant trustworthy as an intellectual collaborator.

The 1 million token context window available via API also enables research workflows that were previously impossible: feeding an entire dissertation, a full dataset, or years of published papers into a single coherent analysis session.

[Timeline: The Evolution of Claude, from safety lab to enterprise standard.]

📋 Enterprise Security Compliance Matrix

One of the most practically important dimensions of the Claude vs ChatGPT debate for enterprise IT and security leaders is the compliance landscape. Here’s how the major platforms compare across the certifications and controls that matter most in regulated industries.

| Certification / Control | Claude Enterprise | ChatGPT Enterprise |
|---|---|---|
| SOC 2 Type 2 | ✅ Full certification | ✅ Full certification |
| HIPAA Compliance Option | ✅ Available on Enterprise | ✅ Available on Enterprise |
| GDPR Compliance | ✅ Full compliance | ✅ Full compliance |
| Proprietary Data Not Used for Training | ✅ Guaranteed | ✅ Guaranteed (Enterprise) |
| Jailbreak Resistance | ✅ 10× more resistant (Constitutional AI) | ○ Standard filtering approach |
| Private Knowledge Base Ingestion | ✅ Native Claude Enterprise feature | ✅ Via integrations |
| Role-Based Access Control (RBAC) | ✅ | ✅ |
| Single Sign-On (SSO) | ✅ | ✅ |
| Copyright Indemnity | ✅ Paid commercial services | ○ Limited |
| Third-Party App Ecosystem | ○ Growing, limited native integrations | ✅ Hundreds of integrations |

Sources: DigitalOcean · Walturn · IntuitionLabs

📡 The Bigger Picture: Why AI Security Is the New Competitive Frontier

Step back from the feature comparisons for a moment and consider the macro context. We are now three years into the mainstream AI adoption cycle. The first wave was characterized by fascination — people discovering what these systems could do. The second wave was characterized by experimentation — organizations piloting AI in non-critical workflows. The wave we’re entering now is categorically different: enterprises are integrating AI into core operational infrastructure, and that changes the risk calculus entirely.

When AI is processing your legal contracts, analyzing your financial data, contributing to your security architecture, and interacting with your customers, the cost of a failure is no longer an embarrassing hallucination in a demo. It’s a data breach, a compliance violation, a material misstatement, or a security incident. At that level of stakes, the AI platform you choose is a risk management decision, not just a productivity decision.

This is the structural tailwind behind Claude’s growing enterprise adoption. Anthropic made the investment in Constitutional AI, SOC 2 certification, HIPAA compliance, and adversarial resistance years before these became the differentiating factors in enterprise procurement. That foresight is now a competitive moat.

The question enterprise technology leaders are now asking isn’t “which AI is smartest?” It’s “which AI can I put in front of my most sensitive workflows?” Those are increasingly different questions with different answers.

— AI Insights Editorial Analysis · February 2026

There’s also a philosophical argument worth making explicitly, because it has practical implications. The AI safety movement, which Anthropic represents most visibly, is not fundamentally about limiting what AI can do. It’s about ensuring that what AI does is aligned with what humans actually intend and need. A model that refuses to be jailbroken isn’t less capable than one that can be manipulated into harmful outputs. It’s more trustworthy. And in enterprise contexts, trustworthiness is capability.

🔮 What Comes Next: The Agentic AI Security Challenge

The next frontier in AI security isn’t the model — it’s the agent. As Claude Code and Claude Cowork move toward autonomous operation — executing tasks, modifying files, sending communications, interacting with external systems — the security surface area expands dramatically. An AI assistant that can be prompted into bad behavior is a risk. An AI agent that can be prompted into bad behavior while autonomously executing system-level tasks is a categorically more serious one.

Anthropic’s Constitutional AI architecture is designed with this trajectory in mind. The same principles that govern how Claude responds to a harmful prompt also govern how it reasons about whether to execute a potentially dangerous agentic action. Claude Code Security’s semantic code analysis — reasoning about intent and data flow rather than matching patterns — is an early expression of how this architecture scales to autonomous operation.
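
Model-level safety is usually paired with controls outside the model. As a minimal sketch of that second layer, the gate below classifies a proposed agent action before anything executes. The action names, the three-way decision, and the `/workspace/` root are hypothetical choices for this example, not features of Claude Code or Claude Cowork.

```python
import posixpath
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"
    DENY = "deny"


# Hypothetical policy: read-only actions run freely, side effects need approval.
READ_ONLY = {"read_file", "list_directory", "search_code"}
NEEDS_APPROVAL = {"write_file", "send_email", "run_shell"}


def gate(action: str, target: str, workspace: str = "/workspace/") -> Decision:
    """Decide whether a proposed agent action may proceed."""
    if action in READ_ONLY:
        return Decision.ALLOW
    if action in NEEDS_APPROVAL:
        if action == "write_file":
            # Normalize first so "../" segments cannot escape the workspace.
            if not posixpath.normpath(target).startswith(workspace):
                return Decision.DENY
        return Decision.ASK_HUMAN
    # Default-deny: anything the policy does not know about is refused.
    return Decision.DENY


print(gate("write_file", "/workspace/notes.txt"))
print(gate("write_file", "/workspace/../etc/passwd"))
```

The default-deny branch is the important design choice: an agent that is talked into proposing an unfamiliar action is stopped by the policy even if the prompt-level defenses fail.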

The DEV Community analysis published just this week noted that “the era of conversational AI chatbots is officially giving way to the era of agentic AI — systems that don’t just talk to you, but actually do the work for you.” Claude’s safety architecture was built for this moment. It is not a retrofit.

The AI arms race is real and the pace of change is unprecedented. Choosing the right architectural foundation now will determine which organizations can safely scale their AI investments in the years ahead. Anthropic’s bet is that this foundation is safety-first reasoning. In 2026, the evidence suggests that bet is paying off.

Ready to Explore Claude AI for Your Organization?

Start with the free tier, or read Anthropic’s official documentation to understand how Claude Enterprise can integrate with your existing security and compliance framework.